Introduction

Artificial Intelligence (AI) has been touted as the answer to a multitude of business challenges. Yet AI, along with machine learning and large language models (LLMs), still poses technical and regulatory challenges as the technology evolves. Threat actors use AI to create deepfake videos, text, and audio; craft convincing phishing emails; bypass security measures; and automate malicious activities, prompting national and international security concerns.

Companies are developing their own Generative AI (GenAI) models to improve efficiency and boost their bottom line. However, GenAI models require massive amounts of training data drawn from diverse sources, which raises privacy and copyright concerns over how that data is collected. In response, governments are proposing or enacting new laws and regulations to prevent or mitigate the harm that AI usage may cause. For example, new regulations in Europe are designed to protect fundamental rights, including the privacy of consumers’ personal information, and to address other justice and ethics issues. While these rules may put the region at a competitive disadvantage by increasing reporting burdens on companies, they also clarify obligations and reduce the burden of trying to harmonize varying rules.

Whatever the regulatory landscape, companies seeking to build an AI framework need to recognize that more data brings more risk. Proper risk protocols should be in place to help ensure privacy, security, and alignment with the wider Environmental, Social, and Governance (ESG) policies each organization has adopted.

Artificial Intelligence, Data & Digital Regulations: Risks

- Cyberattacks and malware powered by AI, potentially resulting in:

- Data breaches

- Theft of personal information or intellectual property

- Disruption of services

- Increased costs

- Data poisoning – when an attacker changes the behavior of a GenAI system through manipulation of its training data or process, potentially jeopardizing the reliability of that GenAI model

- Disinformation litigation – output shaped by biased data, or by hallucinations (incorrect or misleading results), may expose the operator to legal risk

- The EU’s Artificial Intelligence Act, which imposes significant responsibility and risk management requirements on companies that provide high-risk AI systems, such as:

- Critical infrastructure operations

- Risk assessment and pricing for life and health insurance

- Credit scoring

- Systems for hiring or evaluating employees

- Data used to train a company’s LLM may be protected by copyright, which could lead to intellectual property (IP) litigation

- M&A transactions – when acquiring a company with an AI framework, the acquirer needs to ask:

- What data is being inherited?

- What type of vetting will be conducted?

- Cost – AI can be expensive, driven by cybersecurity certification requirements and enormous energy consumption

- Environmental impact of AI, due to factors such as:

- AI being housed in data centers that generate electronic waste containing hazardous substances

- Data centers relying on water during construction and later to cool electrical components

- Data centers requiring energy that often comes from burning fossil fuels

- Microchips used by AI needing rare earth elements that are not always mined according to ESG standards

- Not building an AI system may mean losing a competitive advantage – conversely, putting an AI product out too quickly may open up a whole new vector of vulnerabilities for cyberattacks

- Ethical problems with AI – the technology can be used to spread disinformation and to create deepfakes and other synthetic media, and its output can unintentionally plagiarize existing work or contain false or abusive content

The EU Artificial Intelligence Act Classifies AI According to Its Risk

- Unacceptable-risk AI systems are prohibited under Article 5 of the Act

- These include social scoring systems; AI systems that deploy subliminal techniques beyond a person’s consciousness, or purposefully manipulative or deceptive techniques; AI systems that create or expand facial recognition databases through the untargeted scraping of facial images from the internet or CCTV footage; and biometric categorization systems used to infer race, political opinions, religious beliefs, or other sensitive characteristics

- Companies engaging in prohibited AI practices face fines of up to EUR 35 million or 7% of total worldwide annual turnover, whichever is higher

- Limited-risk AI systems are subject to lighter obligations that focus on transparency

- Developers and deployers in this category must ensure that end users know they are interacting with AI (e.g., a chatbot) or viewing AI-generated content (e.g., a deepfake)

- Violations may result in fines of up to EUR 15 million or 3% of total worldwide annual turnover, whichever is higher

- Minimal-risk AI systems, such as AI-enabled video games and spam filters, face no specific obligations under the Act
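The fine caps in the EU AI Act (Article 99) pair a fixed amount with a share of total worldwide annual turnover, and for most companies the higher of the two applies. For small and medium-sized enterprises (SMEs), including start-ups, the lower of the two applies instead. The minimal Python sketch below illustrates that arithmetic. The function and constant names are hypothetical, and the result is only a theoretical ceiling: regulators set actual fines case by case, and they may fall well below these caps.

```python
# Hypothetical sketch: theoretical maximum fine under the EU AI Act's
# "fixed cap or share of worldwide annual turnover" rule (Article 99).
# Illustrative only; regulators set actual fines case by case.

# (fixed cap in EUR, share of total worldwide annual turnover in percent)
PROHIBITED_PRACTICES = (35_000_000, 7)  # Article 5 violations
TRANSPARENCY_BREACH = (15_000_000, 3)   # e.g., undisclosed chatbots or deepfakes


def max_fine_eur(turnover_eur: int, fixed_cap_eur: int, pct: int, is_sme: bool = False) -> int:
    """Return the maximum possible fine in EUR (integer math avoids float rounding)."""
    turnover_cap = turnover_eur * pct // 100
    if is_sme:
        # SMEs and start-ups face the LOWER of the two amounts.
        return min(fixed_cap_eur, turnover_cap)
    # Other companies face the HIGHER of the two amounts.
    return max(fixed_cap_eur, turnover_cap)


if __name__ == "__main__":
    # EUR 2 billion turnover: 7% (EUR 140 million) exceeds the EUR 35 million cap.
    print(max_fine_eur(2_000_000_000, *PROHIBITED_PRACTICES))  # 140000000
    # EUR 100 million turnover: 7% is only EUR 7 million, so EUR 35 million applies.
    print(max_fine_eur(100_000_000, *PROHIBITED_PRACTICES))  # 35000000
    # The same company as an SME: the lower amount, EUR 7 million, applies.
    print(max_fine_eur(100_000_000, *PROHIBITED_PRACTICES, is_sme=True))  # 7000000
```

For both tiers, the turnover-based figure becomes the binding ceiling once worldwide annual turnover exceeds EUR 500 million (35 / 0.07 = 500 and 15 / 0.03 = 500). For large enterprises, the percentage is therefore the number that matters.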

Artificial Intelligence, Data & Digital Regulations: Opportunities

- AI’s ability to process and analyze vast amounts of data quickly, and to automate repetitive tasks, is improving organizational efficiency across industries

- AI-enhanced fraud detection, which identifies patterns and anomalies in financial data, is enabling faster responses by cybersecurity, financial crime, and corporate investigations teams

- Insurance policies covering AI risks, such as data poisoning, usage rights infringements, and violations of regulations like the EU’s AI Act, are on the rise

- AI in legal technology (legal research, contract management, writing assistance, and eDiscovery in litigation) is creating cost efficiencies by automating work previously done by people

- Larger AI companies are teaming up with the nuclear energy sector to use small modular reactors (SMRs) to meet the power needs of their massive data centers

- Increased employment opportunities for people who can vet and review final AI-generated products

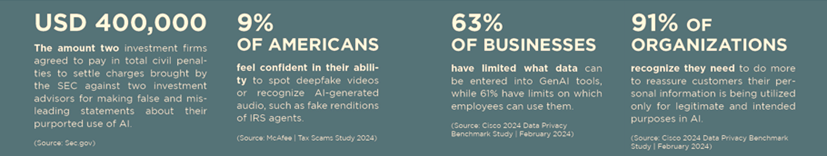

Supporting Statistics